[arXiv](https://arxiv.org/abs/2412.21037) [Model](https://huggingface.co/declare-lab/TangoFlux) [Project Page](https://tangoflux.github.io/) [Demo](https://huggingface.co/spaces/declare-lab/TangoFlux) [CRPO Dataset](https://huggingface.co/datasets/declare-lab/CRPO)

## Overall Pipeline

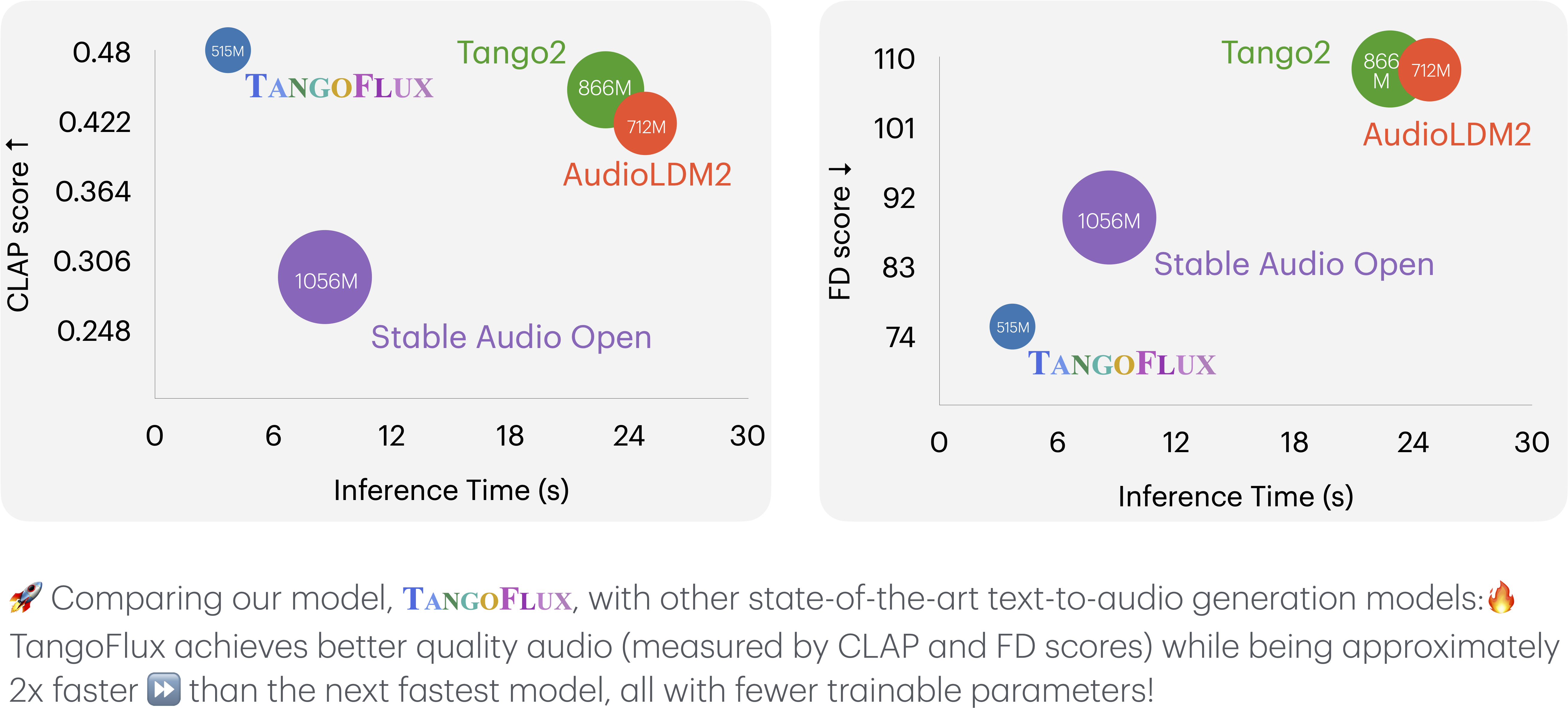

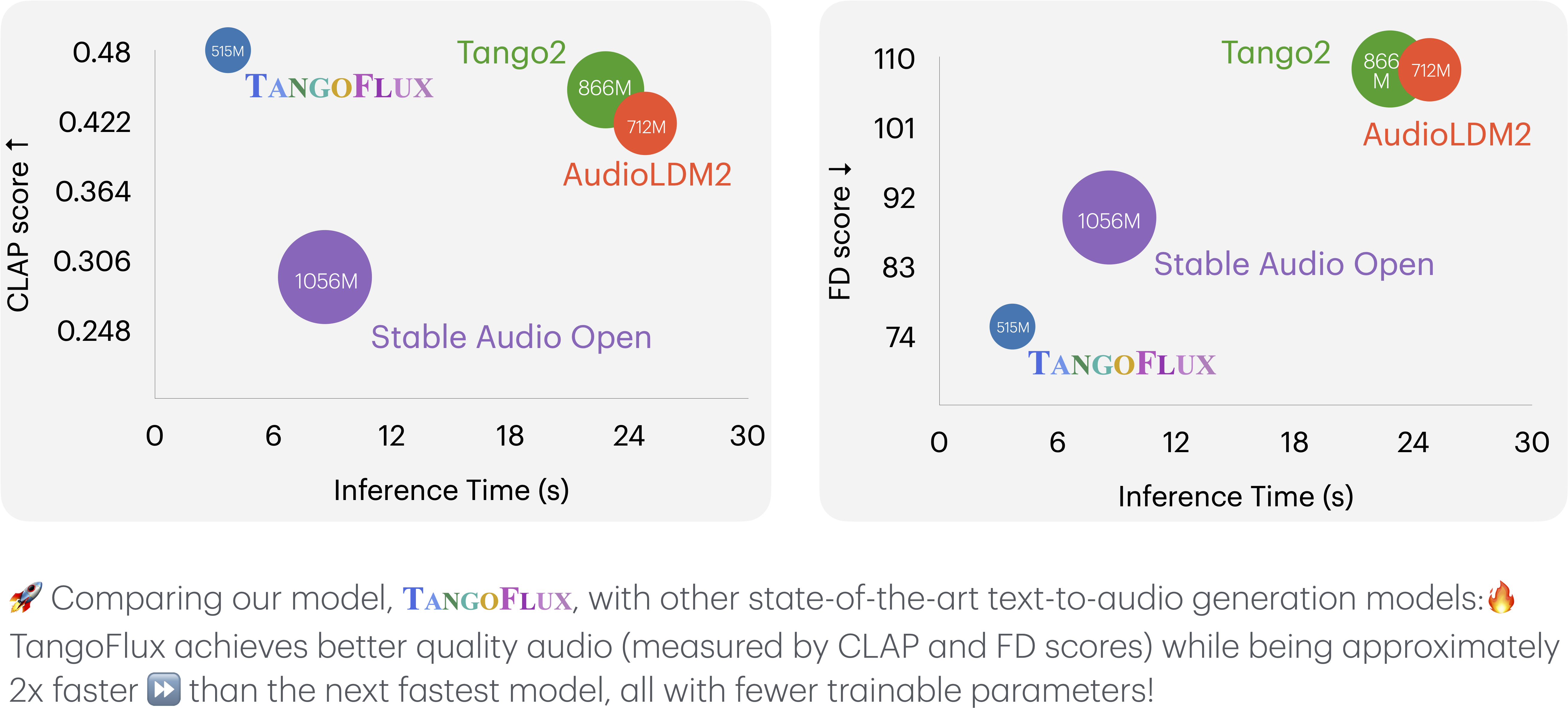

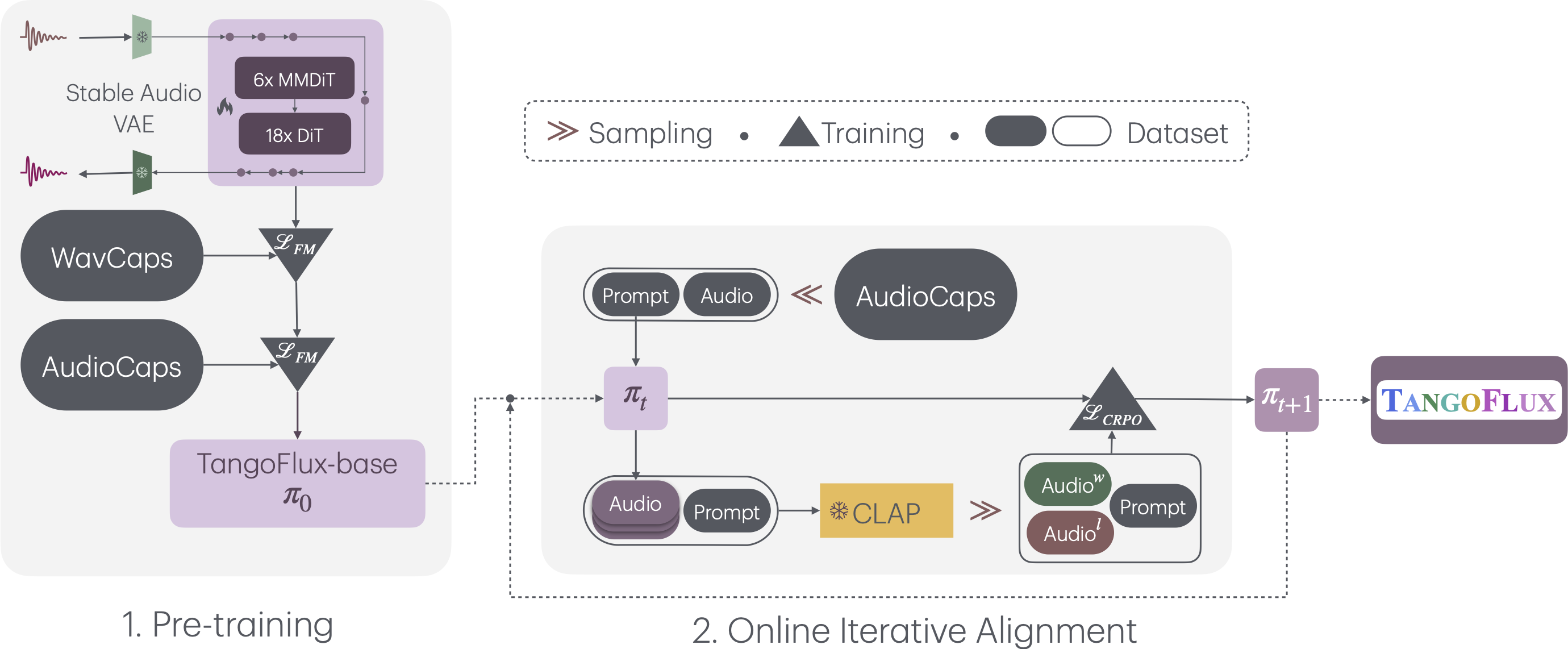

TangoFlux is built from FluxTransformer blocks, namely Diffusion Transformer (DiT) and Multimodal Diffusion Transformer (MMDiT) blocks, conditioned on a text prompt and a duration embedding to generate 44.1 kHz audio up to 30 seconds long. TangoFlux learns a rectified flow trajectory over audio latent representations encoded by a variational autoencoder (VAE). Training proceeds in three stages: pre-training, fine-tuning, and preference optimization. TangoFlux is aligned via CRPO, which iteratively generates new synthetic data and constructs preference pairs to perform preference optimization.
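The iterative CRPO loop (generate synthetic data with the current model, build preference pairs, optimize, repeat) can be sketched roughly as below. This is a minimal sketch under stated assumptions, not the TangoFlux implementation: `generate`, `score`, and `update` are hypothetical callbacks. The scorer stands in for some text-audio alignment model, picking the best and worst of N candidates is one plausible pairing strategy chosen for illustration, and `update` is where a real preference-optimization objective would run (this sketch does not implement one).

```python
import random
from typing import Callable, List, Tuple, TypeVar

A = TypeVar("A")  # an audio sample (a waveform or latent in practice)
M = TypeVar("M")  # model state


def build_preference_pairs(
    prompts: List[str],
    generate: Callable[[str], A],
    score: Callable[[str, A], float],
    num_candidates: int = 5,
) -> List[Tuple[str, A, A]]:
    """For each prompt, sample candidates, rank them by score, and keep
    (prompt, highest-scoring, lowest-scoring) as a preference pair.
    The best-vs-worst pairing rule is an assumption for illustration."""
    pairs = []
    for prompt in prompts:
        candidates = [generate(prompt) for _ in range(num_candidates)]
        ranked = sorted(candidates, key=lambda a: score(prompt, a), reverse=True)
        pairs.append((prompt, ranked[0], ranked[-1]))
    return pairs


def iterative_preference_loop(
    model: M,
    prompts: List[str],
    make_generator: Callable[[M], Callable[[str], A]],
    score: Callable[[str, A], float],
    update: Callable[[M, List[Tuple[str, A, A]]], M],
    iterations: int = 3,
) -> M:
    """Each round regenerates synthetic data with the *current* model,
    rebuilds preference pairs, and applies one (hypothetical) update."""
    for _ in range(iterations):
        pairs = build_preference_pairs(prompts, make_generator(model), score)
        model = update(model, pairs)
    return model


# Toy stand-ins (hypothetical): the "model" is a float mean, samples are
# Gaussian around it, and the scorer prefers samples close to 2.0.
rng = random.Random(0)
make_gen = lambda mean: (lambda prompt: rng.gauss(mean, 1.0))
toy_score = lambda prompt, a: -abs(a - 2.0)
# Nudge the mean halfway toward the average "chosen" sample.
toy_update = lambda mean, pairs: mean + 0.5 * (
    sum(c for _, c, _ in pairs) / len(pairs) - mean
)

final_mean = iterative_preference_loop(
    0.0, ["rain on a tin roof", "dog barking"], make_gen, toy_score, toy_update
)
print(final_mean)
```

The key design point the sketch tries to capture is that pairs are rebuilt from the updated model every round, so the preference data tracks the model being aligned rather than a fixed offline set.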

## Quickstart

## Training TangoFlux

## Inference with TangoFlux

Download the TangoFlux model and generate audio from a text prompt:

TangoFlux can generate audio up to 30 seconds long; set the length by passing the `duration` argument to `model.generate`.

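```python
from tangoflux import TangoFluxInference
from IPython.display import Audio

# Download the pretrained TangoFlux checkpoint
model = TangoFluxInference(name='declare-lab/TangoFlux')

# Generate 10 seconds of audio from a text prompt using 50 inference steps
audio = model.generate('Hammer slowly hitting the wooden table', steps=50, duration=10)

# Play the result in a notebook; TangoFlux outputs 44.1 kHz audio
Audio(data=audio, rate=44100)
```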
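Since `duration` is capped at 30 seconds and output is 44.1 kHz, a caller may want to validate the request up front. The helper below is hypothetical (it is not part of the `tangoflux` package) and assumes the limit is inclusive; the sample count it returns is nominal, since the model may pad or trim the waveform slightly.

```python
SAMPLE_RATE = 44_100    # TangoFlux output sample rate, per this README
MAX_DURATION_S = 30.0   # maximum supported length, per this README (assumed inclusive)


def check_duration(duration: float) -> int:
    """Hypothetical pre-check: reject out-of-range durations and return
    the nominal number of samples the request corresponds to."""
    if not 0 < duration <= MAX_DURATION_S:
        raise ValueError(
            f"duration must be in (0, {MAX_DURATION_S}] seconds, got {duration}"
        )
    return round(duration * SAMPLE_RATE)


print(check_duration(10))
```

For the quickstart's `duration=10`, this works out to 10 × 44,100 = 441,000 samples.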

Our evaluation shows that inference with 50 steps yields the best results and takes about 3 seconds. For faster inference, consider setting `steps=25`, which yields similar audio quality.
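One intuition for why fewer steps can hold up is the rectified flow trajectory described above: if the learned paths from noise to latent are close to straight, a coarse ODE solver still lands near the right place. The toy sketch below assumes that `steps` counts uniform Euler steps and that time runs from noise (t=0) to data (t=1); both are assumptions, and TangoFlux's actual sampler and time convention may differ. It uses a hypothetical one-point "dataset" whose ideal velocity field is known in closed form.

```python
import random


def interpolate(x0, x1, t):
    """Point at time t on the straight path from noise x0 (t=0) to data x1 (t=1)."""
    return [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]


def rf_loss(velocity_fn, x0, x1, t):
    """Rectified-flow regression loss: MSE between predicted velocity and x1 - x0."""
    pred = velocity_fn(interpolate(x0, x1, t), t)
    target = [b - a for a, b in zip(x0, x1)]
    return sum((p - q) ** 2 for p, q in zip(pred, target)) / len(target)


def euler_sample(velocity_fn, x0, steps):
    """Integrate dx/dt = v(x, t) from t=0 to t=1 using `steps` uniform Euler steps."""
    x = list(x0)
    dt = 1.0 / steps
    for i in range(steps):
        t = i * dt
        v = velocity_fn(x, t)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x


# Toy setup (hypothetical): every "data latent" equals TARGET, so the ideal
# velocity field has a closed form, v(x, t) = (TARGET - x) / (1 - t).
TARGET = [0.5, -1.0, 2.0]


def ideal_velocity(x, t):
    return [(c - xi) / (1.0 - t) for c, xi in zip(TARGET, x)]


random.seed(0)
noise = [random.gauss(0.0, 1.0) for _ in TARGET]
for n in (50, 25, 5):
    sample = euler_sample(ideal_velocity, noise, steps=n)
    print(n, max(abs(s - c) for s, c in zip(sample, TARGET)))
```

In this toy case the path is perfectly straight, so even 5 steps reach `TARGET` up to floating-point error. A trained model's paths are only approximately straight, which is consistent with (but does not prove) the observation that 25 steps give similar quality to 50.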

## Evaluation Scripts

## Comparison Between TangoFlux and Other Audio Generation Models

This comparison evaluates TangoFlux and other audio generation models across various metrics. Key metrics include:

- **Output Length**: Represents the duration of the generated audio.

- **FD**